Web Scraping Challenges: Finding 月刊 ヤング マガジン Articles in Disparate Data

The allure of web scraping is undeniable: the promise of vast amounts of structured data, ready for analysis, market research, or content aggregation. Yet, the reality often diverges sharply from this ideal. For those attempting to extract specific information, such as articles from 月刊 ヤング マガジン (Gekkan Young Magazine), the journey can quickly become a maze of technical hurdles, unexpected roadblocks, and seemingly empty results. Our recent attempts to locate 月刊 ヤング マガジン content using web scraping techniques revealed a common, frustrating truth: the desired data is frequently elusive, hidden behind a variety of digital defenses and technical inconsistencies.

This article delves into the primary challenges encountered when trying to scrape specific content, particularly for niche subjects like 月刊 ヤング マガジン articles. We'll explore why direct content might be missing, the complexities of character encoding, and broader issues that plague even the most sophisticated scraping efforts. Understanding these obstacles is the first step toward building more robust and effective data extraction strategies.

The Elusive Target: Why "月刊 ヤング マガジン" Articles Remain Hidden

One of the most immediate frustrations in web scraping is encountering a complete lack of relevant content, even when you're sure it exists. Our search for 月刊 ヤング マガジン articles brought this issue to the forefront. Instead of articles, we frequently encountered "PAGE NOT FOUND" messages, login prompts, or entirely unrelated content. This isn't a failure of the scraper itself, but a consequence of how the target websites structure and manage their content.

- Page Not Found (404 Errors): A common sight for any scraper, a 404 error indicates that the requested URL does not exist on the server. For archived magazine content like 月刊 ヤング マガジン, this could mean several things:

  - The content was genuinely removed or never existed at that specific URL.

  - The URL structure has changed, and the old links are no longer valid.

  - The content is dynamically loaded and requires specific interactions (e.g., clicking pagination) that a simple HTTP GET request won't trigger.

- Login Walls and Registration Barriers: Many premium content providers, including popular magazines, protect their archives behind registration or subscription walls. What appears to be an empty page to a basic scraper is, in fact, a login prompt. These websites require authenticated sessions, often involving cookies and session management, which adds a significant layer of complexity to scraping. Without proper authentication, the scraper only sees the login page, never the actual 月刊 ヤング マガジン article content.

- Disparate Data Sources: Sometimes, the content isn't on the expected page at all, or it's embedded within a larger, less relevant document. For example, a search for articles might return an entire "Unicode Table" page instead of the desired text, which suggests the initial search or URL was slightly off and pointed to a utility page rather than a content page. This highlights the importance of precise URL targeting and content verification.
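The three outcomes above can often be told apart before any article parsing is attempted. The following is a minimal sketch under stated assumptions, not a definitive detector: the function name `classify_response`, the hint patterns, and the category labels are all hypothetical, and a real site will need its own patterns (for example, an article page that also carries a login form in its header would be misclassified here).

```python
import re

# Hypothetical hint patterns; tune them for the site being scraped.
SOFT_404 = re.compile(r"page not found", re.IGNORECASE)
PASSWORD_FIELD = re.compile(r"<input[^>]*type=[\"']?password", re.IGNORECASE)


def classify_response(status_code: int, html: str, keyword: str) -> str:
    """Label a fetched page as 'not_found', 'login_wall', 'unrelated', or 'ok'."""
    # Hard 404, or a "soft 404" served with status 200.
    if status_code == 404 or SOFT_404.search(html):
        return "not_found"
    # Authentication required, or the page contains a password input.
    if status_code in (401, 403) or PASSWORD_FIELD.search(html):
        return "login_wall"
    # The page loaded, but the term we are looking for is absent.
    if keyword not in html:
        return "unrelated"
    return "ok"
```

Logging the label alongside each URL makes it easier to see whether a run failed because of dead links, authentication, or poor URL targeting.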

Navigating the Labyrinth of Character Encoding and Unicode

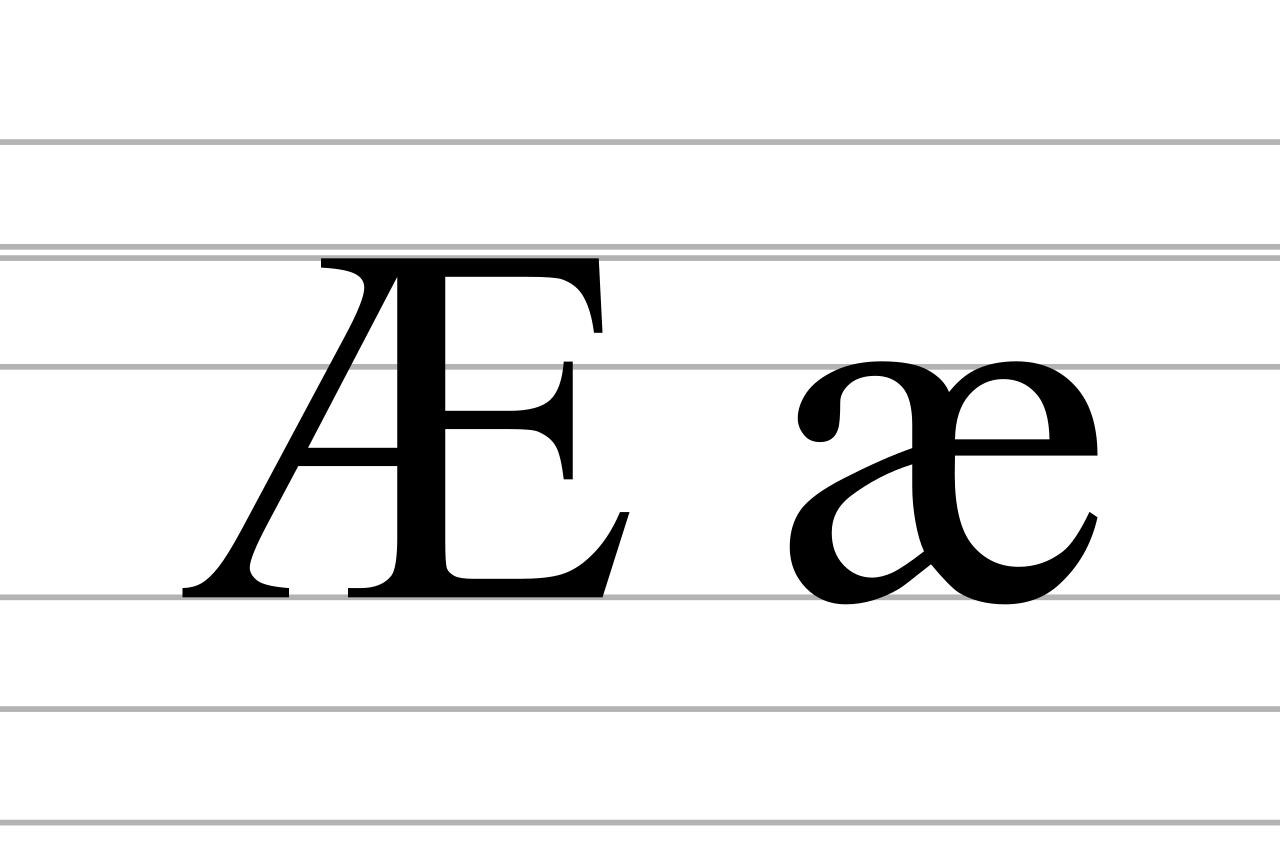

One of the most insidious challenges in scraping international content, especially in languages such as Japanese, the language of 月刊 ヤング マガジン, is dealing with character encoding. Our reference material specifically highlighted issues with "special characters such as ü and Ã" and the prevalence of "Unicode Table" information. This is a critical area where data can become corrupted or unreadable if not handled correctly.

Unicode is a universal character standard designed to represent text from all writing systems in the world, and UTF-8 is its most widely used encoding. For Japanese text like 月刊 ヤング マガジン, UTF-8 is the most common and recommended encoding. However, older websites or specific server configurations might still use legacy encodings such as Shift-JIS or EUC-JP. When a scraper assumes one encoding (e.g., ISO-8859-1, common for Latin alphabets) but the actual content is in another (e.g., UTF-8), characters become garbled. This often manifests as strange symbols (like the ü and Ã mentioned) where readable text should be.

Practical Tips for Encoding Challenges:

- Detect Encoding: Many scraping libraries can automatically detect the encoding from the HTTP Content-Type header. However, sometimes the header is missing or incorrect. In such cases, libraries like chardet in Python can help infer the encoding from the raw bytes.

- Specify Encoding Explicitly: If you know the encoding, specify it when decoding the response. For Japanese content, try decoding with 'utf-8', 'shift_jis', or 'euc_jp'.

- Handle BOM (Byte Order Mark): Some UTF-8 files begin with a Byte Order Mark, which can interfere with parsing if it is left in the decoded text. Be aware of it and strip it if necessary; in Python, decoding with 'utf-8-sig' removes a leading BOM.

- Validate After Decoding: Always check a sample of the decoded text to ensure it's readable. If you see mojibake (garbled characters), try a different encoding.
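The tips above can be combined into one small routine. This is a sketch under stated assumptions rather than a replacement for a detector such as chardet: it uses only Python's standard codecs, tries the three encodings named above, and scores each successful decode by the share of kana and kanji characters. The function names `japanese_ratio` and `decode_japanese` and the scoring heuristic are hypothetical choices made for illustration.

```python
# Candidate encodings from the tips above; 'utf-8-sig' also strips a leading BOM.
CANDIDATES = ("utf-8-sig", "shift_jis", "euc_jp")


def japanese_ratio(text: str) -> float:
    """Share of characters that are hiragana, katakana, or common kanji."""
    if not text:
        return 0.0
    hits = sum(
        1 for ch in text
        if "\u3040" <= ch <= "\u30ff" or "\u4e00" <= ch <= "\u9fff"
    )
    return hits / len(text)


def decode_japanese(raw: bytes) -> tuple[str, str]:
    """Return (text, encoding) for the candidate that looks most like Japanese."""
    best = None
    for encoding in CANDIDATES:
        try:
            text = raw.decode(encoding)  # strict mode: invalid bytes raise
        except UnicodeDecodeError:
            continue
        score = japanese_ratio(text)
        # Earlier candidates win ties, so UTF-8 is preferred when equal.
        if best is None or score > best[2]:
            best = (text, encoding, score)
    if best is None:
        raise ValueError("none of the candidate encodings could decode the input")
    return best[0], best[1]
```

The scoring step matters because a wrong legacy codec can sometimes decode bytes without raising an error and still produce mojibake. The same mechanism explains the symbols mentioned earlier: in Python, `"ü".encode("utf-8").decode("latin-1")` yields `"Ã¼"`.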

Understanding and correctly implementing Unicode handling is paramount for anyone dealing with global data. To learn more about this essential aspect, we recommend Exploring Unicode Tables Amidst 月刊 ヤング マガジン Content Searches.

Beyond the Basics: Advanced Web Scraping Roadblocks

While missing content and encoding issues are significant, the world of web scraping presents a plethora of other challenges, especially when aiming for consistent and high-quality data extraction for specific content like 月刊 ヤング マガジン articles.

- Dynamic Content Loading (JavaScript): Modern websites heavily rely on JavaScript to render content. A simple HTTP request often only retrieves the initial HTML shell, with the actual articles, images, or interactive elements loaded subsequently via AJAX calls. Traditional scraping methods (like Python's requests library) won't execute JavaScript, leaving the scraper with an empty or incomplete page. Solutions involve using headless browsers (e.g., Selenium, Playwright, Puppeteer) that simulate a real browser environment, executing JavaScript before extracting the rendered HTML.

- Anti-Scraping Measures: Websites are increasingly sophisticated in detecting and blocking scrapers. These measures include:

  - CAPTCHAs: Tools like reCAPTCHA are designed to distinguish human users from bots.

  - IP Blocking & Rate Limiting: Too many requests from a single IP address within a short period can lead to temporary or permanent bans.

  - User-Agent String Checks: Websites might block requests from common scraper user-agents or require a realistic browser user-agent.

  - Honeypots: Hidden links visible only to automated bots, designed to trap and identify scrapers.

- Website Structure Volatility: Even if a scraper works perfectly today, a minor website redesign tomorrow can break it entirely. Changes in CSS classes, HTML tag structures, or element IDs can render XPath or CSS selectors obsolete, requiring constant maintenance and adaptation.

- Data Quality and Interpretation: Even when content is successfully scraped, it might not be in a usable format. Poorly structured HTML, embedded advertisements, or irrelevant navigation elements can clutter the extracted data, requiring significant post-processing and cleaning to isolate the desired 月刊 ヤング マガジン article text.

- Legal and Ethical Considerations: It's crucial to acknowledge the legal and ethical landscape of web scraping. Always check a website's robots.txt file and Terms of Service. Scraping copyrighted material or data that is explicitly stated not to be scraped can lead to legal repercussions. Respect for server load and privacy is paramount.
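For the data quality point in the list above, a first cleaning pass can be done with the standard library alone. The sketch below is one possible approach, not a complete solution: the class name `ArticleTextExtractor`, the helper `extract_text`, and the set of tags to skip are hypothetical choices, and the depth counter assumes the skipped tags are properly closed.

```python
from html.parser import HTMLParser

# Tags whose text is usually navigation, scripts, or other page chrome.
# This set is an assumption; adjust it for the site being scraped.
SKIP_TAGS = {"script", "style", "nav", "header", "footer", "aside", "noscript"}


class ArticleTextExtractor(HTMLParser):
    """Collect visible text while ignoring everything inside SKIP_TAGS."""

    def __init__(self) -> None:
        super().__init__(convert_charrefs=True)
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep text only when we are not inside a skipped element.
        if self._skip_depth == 0:
            text = data.strip()
            if text:
                self.chunks.append(text)


def extract_text(html: str) -> str:
    """Return the non-boilerplate text of a page, one chunk per line."""
    parser = ArticleTextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)
```

Filtering by tag name rather than by CSS class also makes the cleaning step somewhat less sensitive to the redesigns described under website structure volatility, although it will not remove advertisements placed in ordinary div elements.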

Strategies for Success: Smarter Scraping for "月刊 ヤング マガジン" and Beyond

Despite these challenges, web scraping remains a powerful tool when approached thoughtfully. Here are strategies to improve your chances of success when searching for content like 月刊 ヤング マガジン articles:

- Start with Public APIs: Before attempting to scrape, investigate if the website or content provider (e.g., the publisher of 月刊 ヤング マガジン) offers a public API. APIs are designed for data access and are typically more stable, structured, and legally sanctioned than scraping.

- Employ Headless Browsers for Dynamic Content: For JavaScript-heavy sites, invest time in learning tools like Selenium or Playwright. They simulate user interaction, allowing content to render before extraction.

- Implement Robust Error Handling: Your scraper should anticipate and gracefully handle errors like 404s, timeouts, and network issues. Use try-except blocks and logging to track failures.

- Use Proxy Rotation and Delays: To avoid IP bans, use a pool of proxy IP addresses. Introduce random delays between requests to mimic human browsing patterns and reduce server load.

- Maintain Realistic User-Agents: Rotate user-agent strings to appear as different browsers and operating systems, making it harder for anti-scraping systems to identify you as a bot.

- Adopt an Iterative Development Approach: Start with small, focused scraping tasks. Test frequently. Website structures change, so be prepared to adapt and refine your selectors (XPath, CSS).

- Prioritize Data Cleaning and Validation: The scraping process doesn't end with extraction. Dedicate time to cleaning, structuring, and validating your data to ensure its quality and usability.

- Adhere to Ethical Guidelines: Always respect robots.txt, avoid overloading servers, and be mindful of data privacy and copyright laws.
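Several of the strategies above (error handling, delays between requests, and respect for robots.txt) fit into a small amount of standard-library code. The following is a minimal sketch under stated assumptions: `allowed_by_robots` and `fetch_with_retries` are hypothetical helper names, the `fetch` argument stands for whatever download function you already use, and the retry counts and delays are placeholder values.

```python
import random
import time
from urllib import robotparser


def allowed_by_robots(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check a URL against robots.txt content that was already downloaded."""
    parser = robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


def fetch_with_retries(url, fetch, max_attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call fetch(url), retrying on network errors with growing, jittered delays.

    fetch is any callable that returns the page and raises OSError on failure
    (urllib's URLError and the built-in TimeoutError are OSError subclasses).
    """
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return fetch(url)
        except OSError as exc:
            last_error = exc
            if attempt < max_attempts:
                # Exponential backoff plus random jitter to reduce server load.
                delay = base_delay * 2 ** (attempt - 1) + random.uniform(0, base_delay)
                sleep(delay)
    raise RuntimeError(f"giving up on {url} after {max_attempts} attempts") from last_error
```

Passing `fetch` and `sleep` in as parameters keeps the retry logic independent of any particular HTTP library and lets it be tested without network access. A production version would likely skip retries for permanent failures such as 404 responses.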

Conclusion

The quest to find specific content, such as articles from 月刊 ヤング マガジン, through web scraping serves as a microcosm of the broader challenges in data extraction. From encountering "PAGE NOT FOUND" messages and login walls to battling character encoding problems and advanced anti-scraping techniques, the path is rarely straightforward. Success in web scraping demands not just technical proficiency but also patience, adaptability, and a deep understanding of how websites function. By anticipating these hurdles and implementing strategic solutions, data enthusiasts can turn seemingly disparate and elusive data into valuable, actionable insights.